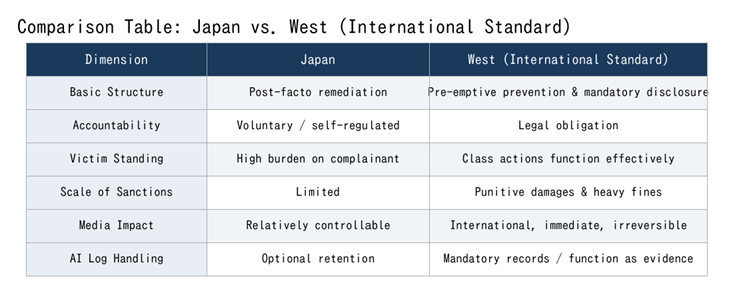

Legal Structure: Japan vs. International

Japan’s legal system is fundamentally reactive — victims must come forward after harm occurs, administrative action is slow, and corporate accountability is largely voluntary. This structure has historically allowed problems to be “handled internally.”

International legal systems, particularly in the EU and US, are proactive and disclosure-mandatory. Companies must prepare risk assessments, technical documentation, and audit records before problems occur — and disclose them to regulators and consumers. When harm does occur, class action suits, punitive damages, and regulatory sanctions proceed in parallel.

Comparison Table:

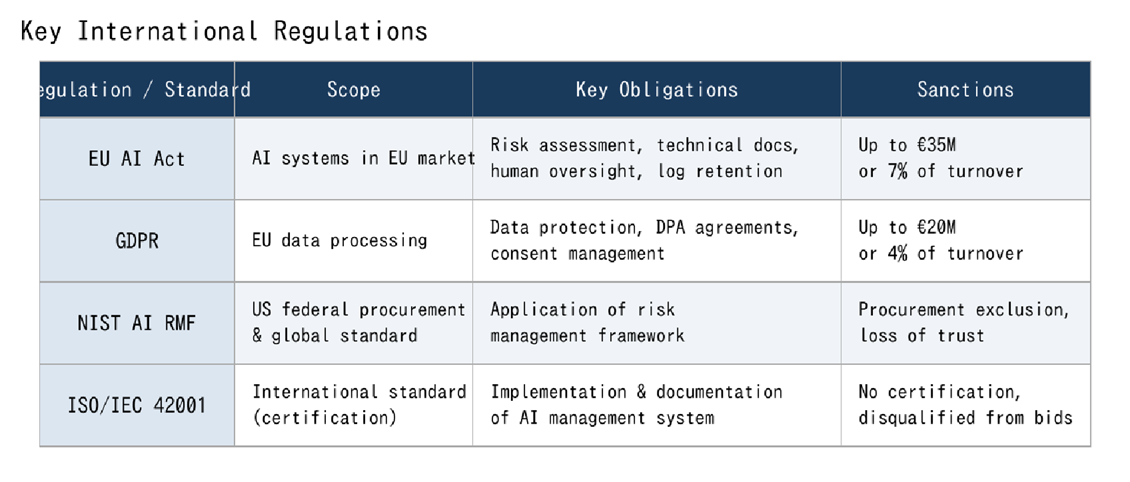

EU AI Act & International Regulatory Comparison

The EU AI Act, enacted in 2024, is the world’s first comprehensive AI regulation — classifying AI systems by risk level and imposing legal obligations on high-risk categories (employment, healthcare, education, critical infrastructure, etc.).

Japanese companies that place AI systems on the EU market, or whose AI output is used in the EU, fall within its scope. The assumption that “we built it in Japan, so it doesn’t apply” is incorrect. Penalties for the most serious violations reach up to €35,000,000 or 7% of global annual turnover, whichever is higher.
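To make the penalty ceiling concrete, the arithmetic can be sketched as below. This is an illustrative calculation only, not legal advice. It assumes the "whichever is higher" rule that applies to the top penalty tier for large undertakings; the function name and constants are hypothetical, and amounts are whole euros.

```python
# Illustrative sketch: upper bound of the top-tier EU AI Act fine.
# Assumption: the ceiling is the HIGHER of a fixed cap and a share of
# global annual turnover. Names below are hypothetical, for illustration.

FIXED_CAP_EUR = 35_000_000   # fixed ceiling quoted in the text
TURNOVER_PERCENT = 7         # percentage of global annual turnover


def max_penalty_eur(global_annual_turnover_eur: int) -> int:
    """Return the penalty ceiling in whole euros for a given turnover."""
    turnover_based = global_annual_turnover_eur * TURNOVER_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based)


if __name__ == "__main__":
    for turnover in (100_000_000, 500_000_000, 1_000_000_000):
        print(f"turnover €{turnover:,} -> ceiling €{max_penalty_eur(turnover):,}")
```

Under these assumptions the fixed cap dominates below €500,000,000 in turnover, and the percentage-based figure dominates above it.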

Key International Regulations

Glossary of Corporate AI Liability

Essential legal concepts for understanding international AI accountability.

Accountability

The obligation of AI developers, operators, and users to explain the decisions, behaviors, and impacts of AI systems — including the ability to trace and answer: “Who decided what, and on what basis?”

EQAI embeds accountability directly at the conversation layer.
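As a rough illustration of what a traceable answer to “Who decided what, and on what basis?” could look like in data, here is a minimal sketch. It is not EQAI's implementation; the `DecisionRecord` type, its field names, and the `to_audit_line` helper are all hypothetical.

```python
# Hypothetical sketch of an accountability record. Field names are
# assumptions chosen to mirror "who decided what, and on what basis".
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def _utc_now() -> str:
    """Current time as an ISO 8601 string in UTC."""
    return datetime.now(timezone.utc).isoformat()


@dataclass(frozen=True)
class DecisionRecord:
    actor: str       # who decided (a person, team, or system identifier)
    decision: str    # what was decided
    basis: str       # on what basis (policy, evidence, or rule cited)
    decided_at: str = field(default_factory=_utc_now)


def to_audit_line(record: DecisionRecord) -> str:
    """Serialise one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    record = DecisionRecord(
        actor="reviewer-01",
        decision="escalate to human review",
        basis="internal policy section 4.2",
    )
    print(to_audit_line(record))
```

Freezing the record means an entry cannot be modified after creation, which fits the idea of a trace that can be answered for later.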

Transparency

The requirement that AI systems disclose how they function and what decision processes they follow in a form understandable to humans. The EU AI Act mandates transparency for high-risk AI systems.

Human Oversight

The requirement to maintain mechanisms by which humans can monitor, intervene in, and correct AI system behaviour. This is a core requirement of the EU AI Act — a safeguard preventing AI from making critical decisions autonomously.

Punitive Damages

Damages awarded in excess of actual harm, designed to punish wrongdoing and deter future misconduct. Widely applied in US law — multipliers of actual damages are common for intentional or grossly negligent acts. Japan has no equivalent mechanism.

Class Action

A legal mechanism allowing large numbers of plaintiffs who suffered the same harm to sue collectively. Class actions over AI-driven discrimination, privacy violations, and unjust algorithmic decisions are surging in the West. Japan has only a limited equivalent.

Data Processing Agreement / DPA

A contract mandated by GDPR between data controllers and processors. Having a processor handle EU personal data without a DPA in place breaches GDPR Article 28 and can expose both parties to administrative fines.

International Standards for Accountability & Transparency

The international community is rapidly standardising AI governance. For Japanese companies to earn trust in global markets, they must not merely know these standards — they must demonstrate active implementation.

Key Standards

EU AI Act

Risk-based classification of AI systems into four tiers. High-risk systems require conformity assessments, technical documentation, logging, human oversight, and transparency measures. This is the baseline for Japanese companies entering EU markets.

NIST AI Risk Management Framework (NIST AI RMF)

A comprehensive AI risk management framework from the US National Institute of Standards and Technology, structured around four functions: Govern, Map, Measure, and Manage. Although voluntary, it is widely referenced internationally and is increasingly expected in US federal procurement.

ISO/IEC 42001

The international standard for AI Management Systems — equivalent in stature to ISO 9001 (quality) and ISO 27001 (information security). It demands implementation, documentation, and continuous improvement of AI governance. Increasingly required in international tenders and partnership negotiations.

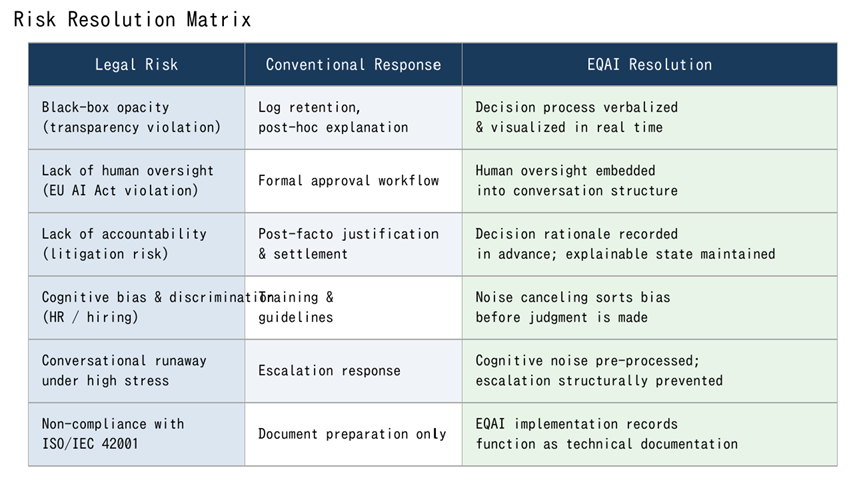

How EQAI Addresses Legal Risk

EQAI translates the requirements of international AI regulation into implementable structures at the conversation layer. Beyond technical compliance, it makes organisational decision-making processes themselves explainable — structurally reducing legal exposure.

Risk Resolution Matrix

EQAI is not a compliance tool.

It is the foundation for honest dialogue between humans and AI.

And honesty, by nature, aligns with international standards.