EQAI™ Ethical Framework

The Missing Layer in AI Governance

Ethical OS for the AI Age

A governance framework designed to preserve

human judgment, emotion, and clarity

in the age of artificial intelligence,

so that compliance becomes meaningful

and human dignity is protected by design.

The most advanced AI system is still only as trustworthy as the values it was built on.

Regulations and standards such as the EU AI Act, the NIST AI RMF, and ISO/IEC 42001 largely define what AI must not do.

EQAI defines what AI must ultimately serve:

human dignity, human judgment, and human freedom.

THE PROBLEM WE’RE SOLVING

AI Governance Is Missing Its Human Core

Current AI regulations address risk. They define what AI must not do.

But few frameworks address what AI should fundamentally serve.

When AI systems make decisions that affect people’s livelihoods, safety, and sense of self, the question is not only “Is this legal?”

It is: “Does this uphold human dignity?”

That gap exists inside every organization deploying AI today.

It exists in the space between your governance policy and how your people actually decide.

EQAI closes that gap.

WHAT EQAI IS

The Layer That Makes Compliance Meaningful

EQAI (Emotional & Ethical Intelligence for AI) is a governance framework developed in Japan that integrates emotional intelligence (EQ) principles into AI design, deployment, and organizational decision-making.

Most compliance frameworks tell you what your AI must not do.

EQAI gives your organization an operational layer for ensuring that what your AI does do reflects genuine human judgment, accountability, and care.

It is not a replacement for existing regulations.

It is the layer that makes compliance meaningful.

EQAI Interaction Model

Human → EQAI Interface → Reflection Prompt → Emotional Awareness → AI Assistance → Human Decision

The EQAI interaction model supports reflective engagement between humans and artificial intelligence.

Instead of accelerating automatic responses, EQAI introduces moments of reflection and emotional awareness before decisions are made.
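To make the ordering of the interaction model concrete, here is a minimal, hypothetical sketch in Python. It assumes each stage is a plain function and that the AI only offers a suggestion; the names `Interaction`, `reflection_prompt`, `emotional_check`, `ai_assist`, and `run_flow` are illustrative and not part of any published EQAI interface. Recording only the emotions a person names (rather than inferring them) is also an assumption made for the sketch. What it shows is the sequence: reflection and emotional awareness come before AI assistance, and the final decision stays with the human.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Interaction:
    """Hypothetical record of one pass through the EQAI interaction flow."""
    request: str
    reflection: str = ""
    feelings: List[str] = field(default_factory=list)
    suggestion: str = ""
    decision: str = ""


def reflection_prompt(item: Interaction) -> Interaction:
    # Reflection Prompt: invite a pause before the AI answers (wording is illustrative).
    item.reflection = f"Before deciding on '{item.request}', who is affected and how?"
    return item


def emotional_check(item: Interaction, feelings: List[str]) -> Interaction:
    # Emotional Awareness: record emotions the person names (assumption: no inference).
    item.feelings = list(feelings)
    return item


def ai_assist(item: Interaction) -> Interaction:
    # AI Assistance: the AI produces a suggestion only; it does not decide.
    note = f" (noting: {', '.join(item.feelings)})" if item.feelings else ""
    item.suggestion = f"Suggested options for '{item.request}'{note}"
    return item


def run_flow(request: str, feelings: List[str],
             decide: Callable[[Interaction], str]) -> Interaction:
    # Human -> EQAI Interface: the request enters the flow.
    item = Interaction(request=request)
    item = reflection_prompt(item)
    item = emotional_check(item, feelings)
    item = ai_assist(item)
    # Human Decision: a person makes the final call, seeing everything above.
    item.decision = decide(item)
    return item


if __name__ == "__main__":
    result = run_flow(
        "reject a loan application",
        ["uncertain"],
        decide=lambda it: "escalate for manual review",
    )
    print(result.reflection)
    print(result.suggestion)
    print(result.decision)
```

The `decide` callback sits outside the pipeline on purpose, mirroring the model’s last step: the AI’s output is an input to the human decision, not a substitute for it.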

Current Prototype

Three core principles guide EQAI:

1. Human Dignity First

Every AI interaction is an encounter with a person.

EQAI ensures that encounter is designed with respect, not just efficiency.

2. Transparency as Trust

People have the right to understand decisions that affect them.

EQAI builds accountability structures that make AI legible to the people it affects, not just to auditors.

3. Peaceful Resolution by Design

Conflict arises when people feel unheard, dismissed, or reduced to data points.

EQAI embeds emotional awareness into AI systems so that they de-escalate conflict rather than inflame it.

WHO THIS IS FOR

Built for Those Who Carry Real Accountability

For AI Builders & Tech Teams:

You are building systems that will touch millions of lives.

The technical decisions you make today will shape human experience for decades.

EQAI gives you a framework to ask — and answer — the harder questions before deployment, not after.

Not as a constraint on what you build, but as a foundation for why it can be trusted.

For Policy Makers & Regulators:

The EU AI Act, GDPR, the NIST AI RMF, and emerging global standards all call for transparency and accountability.

But regulation without operationalization remains aspirational.

EQAI bridges the gap between legal compliance and lived experience, giving organizations the human-layer infrastructure that regulators are increasingly asking to see.

For Corporate ESG & Governance Teams:

Human rights due diligence is no longer optional.

As AI becomes central to business operations, your ESG responsibility now includes how your AI treats people — not just what data it collects, but how it shapes decisions that affect human dignity.

EQAI provides the framework and documentation to demonstrate that commitment with substance, not just statements.

WHY NOW

The Window to Get This Right Is Open — But Not Forever

We are in the foundational years of AI governance.

The frameworks being adopted today will shape how AI affects human life for decades to come.

EQAI was developed with urgency and care — born from direct observation of what happens when AI systems operate without an emotional or ethical compass.

The cost of waiting is not abstract.

It is measured in decisions made without empathy, in conflicts that could have been prevented, in trust that, once broken, is very hard to rebuild.

ALIGNMENT WITH GLOBAL STANDARDS

Designed to Work With the Frameworks You Already Know

EQAI is not built in isolation. It is designed to complement and strengthen:

- EU AI Act: rights-based, human-centered compliance.
  EQAI’s dialogue protocols directly support the Article 14 human oversight requirements.

- GDPR: transparency and individual dignity in data use.
  EQAI reinforces Article 22 obligations around automated decision-making.

- NIST AI RMF: risk management with a human values layer.
  EQAI maps to the Govern, Map, Measure, and Manage functions, operationalizing the RMF’s human-centric principles.

- ISO/IEC 42001: AI management systems grounded in accountability.
  EQAI supplies human-layer documentation that can support AI management system certification.

- UN Guiding Principles on Business and Human Rights: ESG alignment at the AI layer.

CLOSING STATEMENT

Technology Should Mature the Way Humans Do — With Wisdom, Not Just Intelligence

EQAI is not a checklist.

It is a commitment to building AI that reflects the best of human judgment:

thoughtful, dignified, and oriented toward peace.

We invite builders, regulators, and organizations who share this vision to explore the framework, engage with our research, and help shape what ethical AI looks like in practice.

About the project

Founded by Mitsuko Taguchi.

EQAI was not designed in a research lab.

It was built from lived experience at the intersection of human organizations and AI.

The project draws on expertise in the EU AI Act, the NIST AI RMF, ISO/IEC 42001, and cross-cultural compliance frameworks.

This project believes in careful presence over visibility: stepping forward when it matters and stepping back when it doesn’t.

“Dignity by Design.”

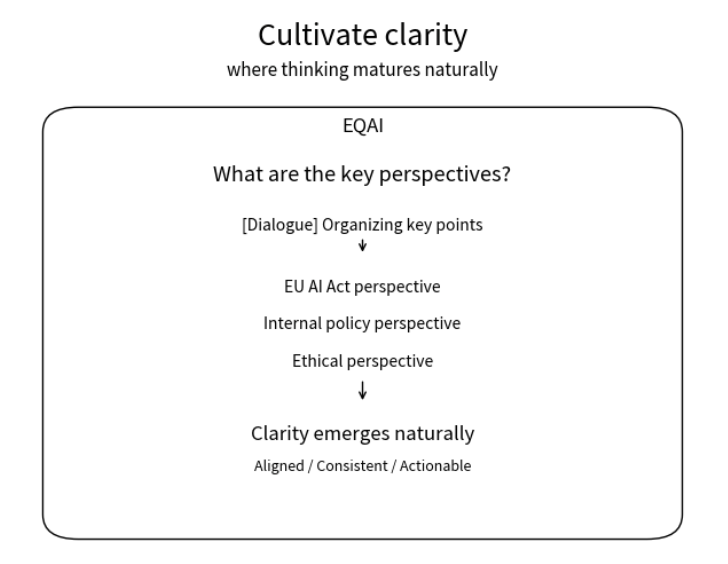

Conceptual Diagram

For organizations navigating complex decisions, EQAI supports clarity, reflection, and responsible action.